This is not the full SOW study guide. This is a remediation pass tailored to your actual quiz answers. Read Tab 2 first (the five things you most need to learn), then Tab 3 (the precision fixes), then memorize Tab 4 (the perfect answer to every question on the quiz). Tab 5 is your night-before cheat sheet.

Figure 1. The work begins quietly.

How you did, in one paragraph

You have the correct mental model. You know EAII is an emotional infrastructure layer. You know Phase 1 is the platform around the engine and Phase 2 is the real engine. You know Engine v0 is a placeholder that gets replaced. You correctly intuited the engine swap concept. Your weakest moments were the deliverables you had not memorized (Safety Framework, Developer Interface, Prototype Evaluation Framework), the technology stack (you confused deliverables with technologies), and the precision of the request flow (you skipped the safety check, the engine interface, the JSON validation, and the event writing). The good news: you do not need to relearn the system. You need to drill three or four specific facts and tighten the phrasing on a handful of others.

Score breakdown

Your three biggest holes

1. The Safety Framework. Crisis-keyword override that runs before the engine. Returns a pre-approved template, never lets the LLM improvise on crisis input.

2. The full request flow, all 12 steps. You said frontend goes straight to Azure. The truth: frontend, FastAPI, validate, safety, engine.analyze(), Azure, JSON validation, event write, return, render, feedback.

3. The 10-technology tech stack. React, FastAPI, Postgres, Valkey, RabbitMQ, Azure OpenAI, AWS, Docker, GitHub Actions, OpenTofu. These are the technologies. Investor Demo App and Internal Tools are deliverables, not technologies.

Your reading order for this guide

- Tab 2 (Must Study). The five biggest gaps drilled hard. Read once carefully.

- Tab 3 (Sharpenings). Ten places where you were close but losing precision. Read the "Sharpen to" lines and say them aloud.

- Tab 4 (Answer Bank). The perfect answer to every quiz question, side by side with your original answer. Memorize the perfect answers.

- Tab 5 (Cheat Sheet). Do this last, the night before retesting. 5-minute review.

These are the five things that cost you the most points. Drill each one until you can say the mantra without hesitation. By the end of this tab, you should have memorized roughly 25-30 specific facts.

The Safety Framework

You did not know what this was on the quiz. It is one of the 12 deliverables and the answer is short, clean, and memorable.

The Safety Framework is a deterministic crisis-keyword override that runs before any LLM call. The system checks user input for crisis patterns. If a pattern triggers, the system bypasses the LLM entirely and returns a pre-approved safety response. The LLM is never asked to improvise on crisis input.

- Deterministic (it follows fixed rules, not AI judgment)

- Pre-engine (it runs before the LLM is called)

- Override (it short-circuits the normal flow)

- Pre-approved template (not an LLM-generated response)

Liability. AI is unpredictable in crisis situations. The team cannot tolerate the LLM improvising a response to someone in distress. So the system enforces a deterministic fallback. This is a "must-know" for both the quiz and any conversation with David or investors about safety.
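If it helps to see the idea as code, here is a minimal sketch of a deterministic, pre-engine override. The pattern list, the template text, and the names `safety_check` and `handle_message` are all assumptions made up for illustration; the real patterns and the real pre-approved template are defined by the team, not here.

```python
import re
from typing import Callable, Optional

# Hypothetical patterns and template text, for illustration only.
CRISIS_PATTERNS = [
    re.compile(r"\bhurt myself\b", re.IGNORECASE),
    re.compile(r"\bend my life\b", re.IGNORECASE),
]
SAFETY_TEMPLATE = "PRE-APPROVED SAFETY RESPONSE (placeholder text)"


def safety_check(text: str) -> Optional[str]:
    """Return the pre-approved template if any crisis pattern matches, else None."""
    for pattern in CRISIS_PATTERNS:
        if pattern.search(text):
            return SAFETY_TEMPLATE
    return None


def handle_message(text: str, call_llm: Callable[[str], str]) -> str:
    """Run the fixed-rule check first; call the LLM only if nothing triggers."""
    override = safety_check(text)
    if override is not None:
        return override  # short-circuit: the LLM is never called on this path
    return call_llm(text)
```

Notice that the same input always produces the same decision, and that the crisis path returns before `call_llm` is ever reached. Those two properties are what "deterministic" and "pre-engine" mean in the mantra.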

The Developer Interface

You did not know much about this on the quiz. Short answer here too.

Two artifacts. First, an OpenAPI spec (the standardized document that describes how to connect to the API). Second, a Python shadow-mode reference example, a runnable script showing a partner system how to call EAII alongside its own response path without changing what the user sees.

- OpenAPI spec

- Python shadow-mode reference

- Partner integration (it is for outside developers, not the internal team)

The partner keeps using their own LLM and shows the user that response, unchanged. In parallel, they send the same input to EAII and log the structured emotional output. They do not show EAII's output to the user. After a few weeks, they have a corpus of "what would EAII have said vs. what we did say." This is the on-ramp for full integration with no production risk.
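A rough sketch of what a shadow-mode turn could look like on the partner's side. This is not the actual reference script: `shadow_mode_turn`, `partner_llm`, `eaii_analyze`, and the log record fields are hypothetical names, and the two calls run one after the other here for simplicity rather than truly in parallel.

```python
import json
from typing import Any, Callable, Dict, List


def shadow_mode_turn(
    user_input: str,
    partner_llm: Callable[[str], str],
    eaii_analyze: Callable[[str], Dict[str, Any]],
    shadow_log: List[str],
) -> str:
    """Return the partner's own response unchanged; log EAII's analysis on the side."""
    partner_response = partner_llm(user_input)
    try:
        analysis = eaii_analyze(user_input)
    except Exception as exc:
        # A failure on the shadow path must never affect what the user sees.
        analysis = {"error": str(exc)}
    shadow_log.append(json.dumps({
        "input": user_input,
        "partner_response": partner_response,
        "eaii_analysis": analysis,
    }))
    return partner_response  # EAII's output is logged, never shown
```

The return value is always the partner's own response, so the user experience cannot change. The log is what builds the "what would EAII have said" corpus over time.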

The Prototype Evaluation Framework

You did not know what this was on the quiz. Another short, clean answer.

Three pieces. A synthetic corpus (around 200 to 500 simulated conversations). One or two simple baselines (sentiment-only models like VADER, plus a basic LLM classifier). And an investor-readable evaluation summary. The framework runs both EAII's structured output and the simple baselines on the same dataset and shows how the structured emotional state representation captures things sentiment alone cannot.

- Synthetic corpus (data made by the simulator, not real user data)

- Baseline comparison (sentiment-only, simple LLM classifier)

- Investor-readable summary (not a benchmark paper, a pitch artifact)

Investors and customers will ask "does this actually work better than just doing X?" The evaluation framework produces credible numbers and a written summary so the team has a defensible answer. It is for fundraising and customer pitches, not for academic publication.
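To make "runs both on the same dataset" concrete, here is a toy harness. Everything in it is an assumption for illustration: the `evaluate` function, the two-item corpus, the labels, and the placeholder predictor are invented, and the number it produces says nothing about how EAII or any real baseline performs.

```python
from typing import Callable, Dict, List, Tuple

Corpus = List[Tuple[str, str]]  # (message text, expected label)


def evaluate(
    systems: Dict[str, Callable[[str], str]], corpus: Corpus
) -> Dict[str, float]:
    """Run every system on the same corpus and return accuracy per system."""
    if not corpus:
        raise ValueError("corpus must not be empty")
    scores = {}
    for name, predict in systems.items():
        hits = sum(1 for text, expected in corpus if predict(text) == expected)
        scores[name] = hits / len(corpus)
    return scores


# Toy stand-ins: a real run would use the simulator's corpus, an actual
# sentiment baseline, and EAII's structured output.
TOY_CORPUS: Corpus = [
    ("Great, another delay. Just what I needed.", "frustrated"),
    ("Thanks, that fixed it!", "satisfied"),
]


def always_satisfied(text: str) -> str:
    """Placeholder predictor that ignores its input."""
    return "satisfied"
```

Calling `evaluate({"placeholder": always_satisfied}, TOY_CORPUS)` scores the placeholder on the toy data. The point of the shape is that every system sees the identical corpus, which is what makes the comparison defensible.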

The Tech Stack (10 technologies)

On the quiz you named "LLM with wrapper, Investor Demo App, Internal Tools." Those are deliverables, not technologies. Memorize this table.

| Technology | What it is for |
|---|---|
| React | Both frontends: public Demo App and admin Internal Tools |
| FastAPI / Python | The backend service (handles requests, validation, safety, engine calls, event writes) |
| PostgreSQL | The event store (replaced MongoDB for technical reasons) |
| Valkey | The cache layer (drop-in replacement for Redis) |
| RabbitMQ | Async background jobs queue (exports, simulator runs) |
| Azure OpenAI | The actual LLM provider behind Engine v0 (gpt-4.1-mini in East US) |
| AWS | Cloud hosting where the system runs (EC2, ECS, S3, CloudWatch) |
| Docker + Compose | Local development environment (containers) |
| GitHub Actions | CI/CD pipeline (automated tests, builds, deploys) |
| OpenTofu | Infrastructure-as-code (replaced Terraform for technical reasons) |

Group them by layer. Frontend layer: React. Backend layer: FastAPI / Python. Storage layer: PostgreSQL (events), Valkey (cache), RabbitMQ (queue). LLM layer: Azure OpenAI. Cloud layer: AWS. DevOps layer: Docker, GitHub Actions, OpenTofu.

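Three components were changed from the original Pivot stack: MongoDB to PostgreSQL with JSONB, Redis to Valkey, and Terraform to OpenTofu. All three swaps are technically motivated. Valkey and OpenTofu are drop-in replacements for Redis and Terraform. PostgreSQL with JSONB fills the same role MongoDB did (storing JSON documents), though it is a different database rather than a literal drop-in.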

The Request Flow (12 steps)

On the quiz you said: "User types, hits enter, message goes to Microsoft Azure, generates rankings, sends back to chat, displays graph." That skipped six steps. Here is the full path.

- User types in the React demo app and hits submit.

- Frontend sends POST to `/v1/emotions/analyze` with the message text and an API key.

- Kong gateway validates the API key and applies rate limiting.

- FastAPI backend receives the request, validates JSON, normalizes whitespace.

- Safety pipeline runs. Scans for crisis keywords. If none, continues. If triggered, returns the pre-approved template and skips everything below.

- Backend calls engine.analyze() at the engine interface boundary.

- Engine v0 builds a prompt and calls Azure OpenAI (gpt-4.1-mini in East US).

- The LLM returns structured JSON: emotion, valence, intensity, tension, intent, response_strategy, conversation_dynamics, all flags, confidence.

- Engine v0 validates the JSON against the Pydantic schema. If invalid, fallback fires with `fallback_triggered=true`, `fallback_reason="parse_error"`.

- Backend writes events to the event store: Message Event, Analysis Event, and (if /respond was called) Response Event.

- Backend returns the structured analysis to the frontend.

- Demo app renders the visualization. User can give feedback, which writes a Feedback Event.

- Validate input (FastAPI normalizes and validates BEFORE anything else)

- Safety check (deterministic, runs BEFORE the engine, can short-circuit everything)

- Validate JSON output (Pydantic schema check on what the LLM returns, before it is stored)
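The backend's share of the flow above (validate, safety, engine call, event write, return) can be sketched as one function. This is a simplified illustration, not the real FastAPI handler: `handle_analyze`, the `text` payload field, the event dictionaries, and the in-memory list standing in for the event store are all assumptions. Output validation is left out because the flow places it inside Engine v0.

```python
from typing import Any, Callable, Dict, List, Optional


def handle_analyze(
    payload: Dict[str, Any],
    safety_check: Callable[[str], Optional[str]],
    engine_analyze: Callable[[str], Dict[str, Any]],
    event_store: List[Dict[str, Any]],
) -> Dict[str, Any]:
    # Validate and normalize input BEFORE anything else.
    text = payload.get("text")
    if not isinstance(text, str) or not text.strip():
        raise ValueError("text must be a non-empty string")
    text = " ".join(text.split())  # collapse runs of whitespace

    # Safety runs BEFORE the engine and can short-circuit everything below.
    template = safety_check(text)
    if template is not None:
        return {"safety_override": True, "response": template}

    # Engine interface boundary: the backend only knows about analyze().
    analysis = engine_analyze(text)

    # Write events, then return the structured analysis to the frontend.
    event_store.append({"type": "message", "text": text})
    event_store.append({"type": "analysis", "analysis": analysis})
    return {"safety_override": False, "analysis": analysis}
```

Read the function top to bottom and you are reading the order of operations. Nothing in it belongs to the frontend, and the only place an LLM could be reached is through `engine_analyze`.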

"Frontend talks directly to Azure." This is wrong. The frontend never touches Azure. The backend is the only thing that talks to Azure. The backend is also the only thing that runs the safety check, validates input, and writes events. The frontend only talks to the backend.

For each card below: the left block is what you actually said on the quiz (or close to it). The right block is the precise version. Memorize the precise version. The difference between a partial answer and a full one is one specific phrase per topic.

Engine v0

The engine boundary / swap point

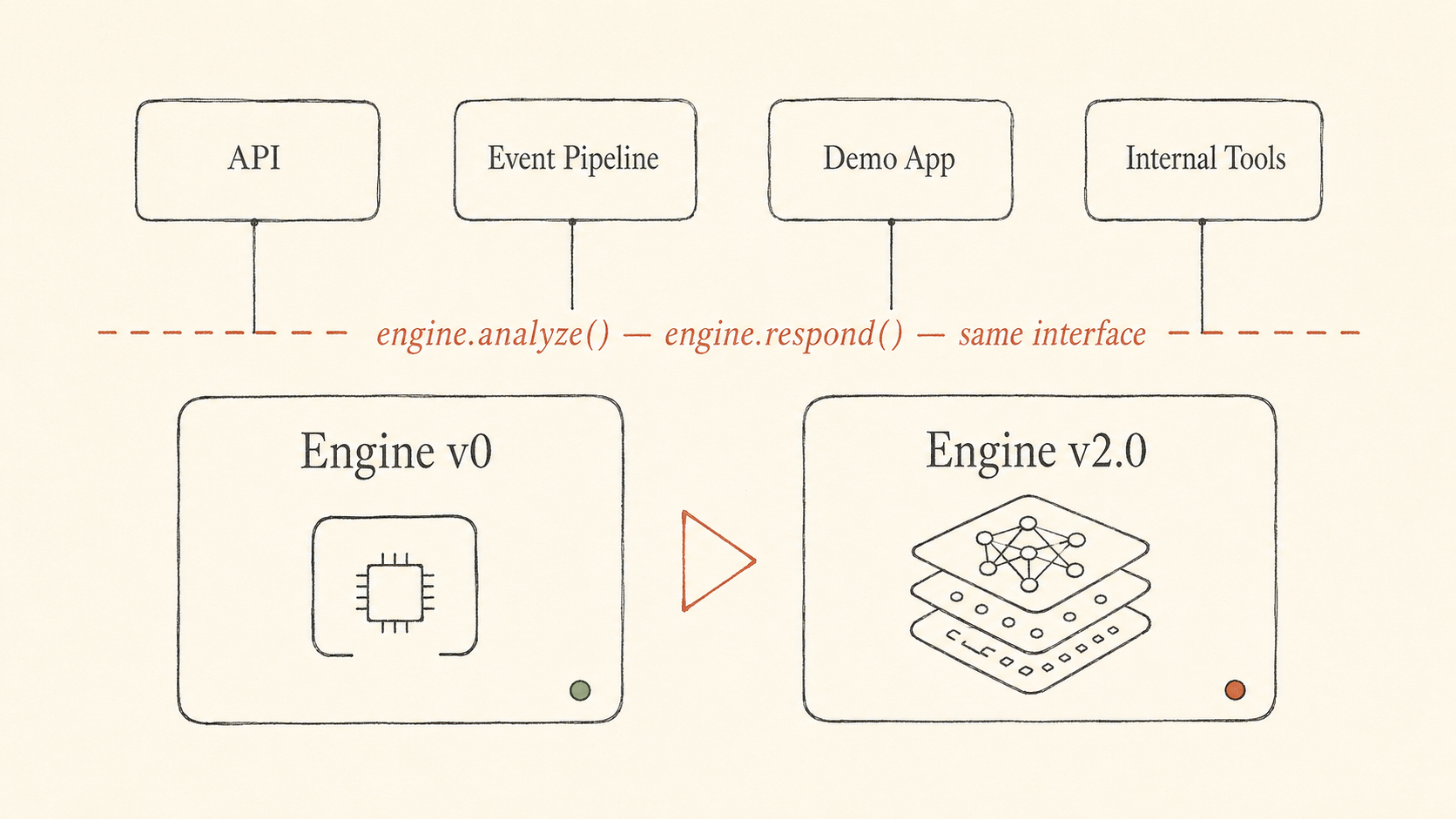

Figure 2. Above the dashed line stays the same. Below the dashed line is the only thing that swaps.

Internal Tools

Modular Backend / API Service

Event Schema and Data Pipeline

The Storage layer (3-box architecture)

Architecture lock (the first milestone)

Disposable vs permanent in Phase 1

Why Phase 1 matters strategically

What you tell investors about the demo

Read each question. Then read the answer aloud. Then close the page and try to say the answer from memory. Each answer below is the version that gets full credit. Memorize each one.

What is Engine v0, and why is it considered disposable?

Engine v0 is a thin wrapper around Azure OpenAI that outputs structured JSON emotional analysis. It produces real outputs for the investor demo, but it is disposable because it is not the proprietary emotional intelligence engine. Phase 2 replaces it with the real Engine v2.0 behind a stable interface, without changing anything else in the platform.

Explain the engine boundary and the swap point.

The engine sits behind a stable interface defined by two function signatures: engine.analyze(input) and engine.respond(input, analysis?). Everything else in the system, the API, the event writer, the demo app, the internal tools, talks to the engine through those signatures. Engine v0 implements those signatures now. Engine v2.0 implements the same signatures later. Swapping the engine does not require redesigning anything around it.
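One way to picture the boundary in Python is a `Protocol` with the two signatures. This is a sketch under assumptions: the class names, return types, and stub bodies are invented for illustration, and the parameter is called `text` here only to avoid shadowing Python's built-in `input`. Neither stub calls a real model.

```python
from typing import Any, Dict, Optional, Protocol


class Engine(Protocol):
    """The stable interface: anything with these two methods is an engine."""

    def analyze(self, text: str) -> Dict[str, Any]: ...

    def respond(self, text: str, analysis: Optional[Dict[str, Any]] = None) -> str: ...


class EngineV0:
    """Placeholder; in the real system this would wrap Azure OpenAI."""

    def analyze(self, text: str) -> Dict[str, Any]:
        return {"engine": "v0", "emotion": "unknown"}

    def respond(self, text: str, analysis: Optional[Dict[str, Any]] = None) -> str:
        analysis = analysis or self.analyze(text)
        return f"[v0] reply for emotion={analysis['emotion']}"


class EngineV2:
    """Stand-in for the Phase 2 engine: same signatures, different internals."""

    def analyze(self, text: str) -> Dict[str, Any]:
        return {"engine": "v2.0", "emotion": "unknown"}

    def respond(self, text: str, analysis: Optional[Dict[str, Any]] = None) -> str:
        analysis = analysis or self.analyze(text)
        return f"[v2.0] reply for emotion={analysis['emotion']}"


def run_platform(engine: Engine, text: str) -> Dict[str, Any]:
    """Everything above the boundary: identical code whichever engine is passed."""
    analysis = engine.analyze(text)
    return {"analysis": analysis, "reply": engine.respond(text, analysis)}
```

`run_platform(EngineV0(), "hi")` and `run_platform(EngineV2(), "hi")` go through exactly the same platform code. Swapping the engine changes one argument, nothing else.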

Define the 12 deliverables.

1. Investor Demo App — public-facing React app for live emotional-analysis demos to investors.

2. Internal Tools — admin web app with five sub-tools: debugger, dashboard, labeling, simulator, research playground.

3. Modular Backend / API Service — FastAPI backend that validates input, runs safety, calls Engine v0, writes events, and returns structured output.

4. Event Schema and Data Pipeline — canonical event envelope plus 7 event types used to store and link interactions.

5. Engine v0 — thin Azure OpenAI wrapper that produces structured emotional output, replaceable in Phase 2.

6. Safety Framework — deterministic crisis-keyword override that runs before the LLM and returns a pre-approved template if triggered.

7. Infrastructure — AWS, Docker, observability, retention, the cloud and deployment environment.

8. Developer Interface — OpenAPI spec plus Python shadow-mode reference for outside developers.

9. Documentation and Handover — runbooks, ADRs, engine replacement guide, the operating manual for taking over.

10. UI Design Pattern Selection — Pivot proposes three design tiles, Human Discovery picks one.

11. Prototype Evaluation Framework — synthetic corpus, baselines, investor-readable summary proving structured output beats simple sentiment.

12. External-LLM-Output Scoring — additive workstream that scores outputs from another AI using EAII's emotional analysis framework. Added April 28.

What is the difference between Investor Demo App and Internal Tools?

The Investor Demo App is the public-facing React app for live emotional-analysis demos to investors. The Internal Tools are an admin web app for the team, containing five sub-tools: debugger, dashboard, labeling, simulator, research playground. The Demo App is for outside audiences. The Internal Tools are for inside the team to debug, inspect, label, simulate, and iterate. Internal Tools are not for training the final model in Phase 1.

Explain the architecture in three boxes: Frontend, Backend, Storage.

Frontend: Demo App plus Admin Tools, both built in React.

Backend: FastAPI plus Engine v0, built in Python. The backend validates input, runs safety, calls the engine, and writes events.

Storage: events database (Postgres) plus cache (Valkey) plus async queue (RabbitMQ).

The whole system runs on AWS, but AWS is the cloud HOSTING environment, not the storage layer itself.

Walk through the request flow.

(1) User types in the React demo app. (2) Frontend sends a POST request to the backend. (3) FastAPI validates and normalizes the input. (4) Safety pipeline runs; if a crisis keyword fires, it short-circuits to a pre-approved template. (5) Backend calls engine.analyze() at the engine interface boundary. (6) Engine v0 builds a prompt and calls Azure OpenAI. (7) The LLM returns structured JSON: emotion, valence, intensity, tension, intent, response_strategy, conversation_dynamics, flags, confidence. (8) Engine v0 validates the JSON against the Pydantic schema; if invalid, fallback fires. (9) Backend writes events: Message Event, Analysis Event, Response Event. (10) Backend returns the structured analysis. (11) Demo app renders the visualization. (12) User can submit a Feedback Event.
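Step 8 is the one most people forget, so here is a small sketch of it. The real system validates against a Pydantic schema; this stand-in uses only the standard library and checks that the fields named in step 7 are present. The function name `parse_engine_output`, the single `flags` key, and the presence-only check are assumptions. The two fallback fields come from the flow itself.

```python
import json
from typing import Any, Dict

# Field names taken from step 7; their types are not specified in this guide,
# so this sketch only checks that each one is present.
REQUIRED_FIELDS = (
    "emotion", "valence", "intensity", "tension", "intent",
    "response_strategy", "conversation_dynamics", "flags", "confidence",
)


def parse_engine_output(raw: str) -> Dict[str, Any]:
    """Validate the LLM's raw output; return a fallback record if it is invalid."""
    try:
        data = json.loads(raw)
        if not isinstance(data, dict):
            raise ValueError("expected a JSON object")
        missing = [name for name in REQUIRED_FIELDS if name not in data]
        if missing:
            raise ValueError(f"missing fields: {missing}")
    except ValueError:  # json.JSONDecodeError is a subclass of ValueError
        return {"fallback_triggered": True, "fallback_reason": "parse_error"}
    data["fallback_triggered"] = False
    return data
```

Whatever the LLM sends back, the backend only ever receives one of two shapes: a complete analysis, or an explicit fallback record. Nothing unvalidated reaches the event store.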

Where does the Safety Framework run, and why?

The Safety Framework runs BEFORE the LLM call. It is a deterministic crisis-keyword override: it scans the user input for crisis patterns. If a pattern triggers, the system returns a pre-approved safety template and does not call the LLM. It exists so the system never lets an LLM improvise on crisis-pattern input.

Explain the Phase 1 timeline.

Five phases. Architecture lock: API payloads, event schema, UI wireframes (week 1). Foundation: backend, event store, Engine v0, safety, demo alpha (weeks 1 to 6). Internal tools: debugger, dashboard, labeling, simulator, playground (weeks 4 to 9). Hardening: QA, staging, production verification (weeks 10 to 12). Handover: documentation, ADRs, engine replacement guide (weeks 9 to 14).

Note: architecture lock is not just UI, it is API payloads plus event schema plus UI wireframes. And internal tools are not for training the model, they are for debugging, dashboards, labeling, simulation, and research.

Name the technologies from Tab 2 and what each is for.

React: both frontends. FastAPI / Python: backend service. PostgreSQL: event store. Valkey: cache. RabbitMQ: async queue. Azure OpenAI: LLM provider behind Engine v0. AWS: cloud hosting. Docker plus Docker Compose: local development. GitHub Actions: CI/CD. OpenTofu: infrastructure-as-code.

(Don't confuse deliverables with technologies. Investor Demo App is a deliverable. React is the technology it is built with.)

Why does Phase 1 matter strategically if the real engine is not built yet?

Phase 1 produces two strategic assets:

(1) A working investor-facing demo that lets Patrick show structured emotional understanding live to investors during fundraising.

(2) A locked architecture, including API, schema, event pipeline, internal tools, that Phase 2 plugs into without redesign.

Demo for fundraising. Architecture for Phase 2.

Finish the investor explanation.

"This demo is not claiming to be the final emotional intelligence engine. It is showing the product architecture, user experience, structured emotional output, API workflow, and proof of concept that the real engine will later plug into."

(Don't say "how it will perform once functioning." Engine v0 does not prove final performance. It proves the shape of the product and platform.)

Which parts are disposable vs permanent?

Disposable: Engine v0. That is it.

Durable: API, schema, event pipeline, demo app, internal tools, infrastructure, developer interface, documentation and handover. Everything except Engine v0.

The whole point of Phase 1 is that the placeholder engine gets thrown away. The platform around it does not.

What should you be able to explain before moving to Tab 3?

The readiness checklist. You should be able to explain:

(1) Each of the 12 deliverables. (2) The request flow end to end. (3) The engine boundary and Phase 2 swap. (4) The taxonomy shape (the structured emotional state fields). (5) The 7 event types and how they link. (6) Why safety runs before the engine. (7) The tech stack and why three components were swapped (Mongo to Postgres, Redis to Valkey, Terraform to OpenTofu). (8) The likely technical questions David might ask and how you would answer them.

This is the night-before review. Five minutes, five facts. After Tab 4 is memorized, this is your last pass before retesting.

The 5-minute cram

The big picture

- EAII is an emotional infrastructure layer for AI systems.

- Phase 1 = the platform around the engine (Pivot is building this).

- Phase 2 = the real engine (your domain, after Phase 1 ends).

- Engine v0 = thin Azure OpenAI wrapper, disposable, replaced in Phase 2.

- The engine boundary = stable function signatures (`engine.analyze`, `engine.respond`). Same signatures in v0 and v2.0. Everything else stays the same when you swap.

The 12 deliverables

- Investor Demo App

- Internal Tools (debugger, dashboard, labeling, simulator, research playground)

- Modular Backend / API Service (FastAPI)

- Event Schema and Data Pipeline (canonical envelope, 7 event types)

- Engine v0 (thin Azure OpenAI wrapper)

- Safety Framework (deterministic, pre-engine, crisis-keyword override)

- Infrastructure (AWS, Docker, observability, retention)

- Developer Interface (OpenAPI spec, Python shadow-mode reference)

- Documentation and Handover (runbooks, ADRs, replacement guide)

- UI Design Pattern Selection (3 design tiles, you pick one)

- Prototype Evaluation Framework (synthetic corpus, baselines, investor summary)

- External-LLM-Output Scoring (added April 28)

The 10 technologies (these are not deliverables)

- React (frontends), FastAPI / Python (backend)

- PostgreSQL (events), Valkey (cache), RabbitMQ (queue)

- Azure OpenAI (LLM provider behind v0)

- AWS (cloud hosting)

- Docker plus Compose (local dev), GitHub Actions (CI/CD), OpenTofu (IaC)

The 3-box architecture

- Frontend: Demo App + Admin Tools, in React

- Backend: FastAPI + Engine v0, in Python

- Storage: events DB (Postgres) + cache (Valkey) + async queue (RabbitMQ)

- (AWS is the cloud the whole thing runs on, NOT the storage layer.)

The request flow

Frontend → FastAPI → validate → safety → engine.analyze() → Azure OpenAI → validate JSON → write events → return → render → feedback.

Frontend never talks to Azure directly. Backend is the only thing that does.

Safety

Runs before the engine. Deterministic crisis-keyword override. Returns a pre-approved template. Never lets the LLM improvise on crisis input.

Disposable vs durable

Only Engine v0 is disposable. Everything else (API, schema, event pipeline, demo app, internal tools, infrastructure, developer interface, documentation) is the durable asset.

Why Phase 1 matters

Two assets: demo for fundraising, and locked architecture for Phase 2.

The investor pitch (do not say "how it performs")

This demo is not the final emotional intelligence engine. It is showing the product architecture, user experience, structured emotional output, API workflow, and proof of concept that the real engine will later plug into.

The three stack swaps (technical, not legal)

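MongoDB to PostgreSQL with JSONB. Redis to Valkey. Terraform to OpenTofu. Valkey and OpenTofu are drop-in replacements; PostgreSQL with JSONB fills the same role MongoDB did but is a different database, not a literal drop-in.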

Figure 3. The night before. The work is done. Now read it once more.

End of guide. Now go drill it. Read aloud. Repeat. Then retake.